What tools are available for scraping with web browsers, and how do we use them? What are some common challenges, tips and shortcuts?

One of the most commonly encountered web scraping issues is: why can't my scraper see the data I see in the web browser? In the illustration, the left side shows what the browser sees and the right side shows what our HTTP web scraper receives. Where did everything go?

Dynamic pages use complex JavaScript-powered web technologies that offload processing to the client. In other words, the page gives users the data and the logic, but they have to put them together to see the whole, rendered web page. An example of such a page can be as simple as one whose script carries a field such as `content: "Available 2024 on scrapfly.io, maybe."` and uses `document` to place it into the page.
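
To make the idea concrete, here is a minimal sketch in JavaScript. The page markup, the `content` element id and the helper names `staticContent` and `renderedContent` are assumptions made for illustration, and the tiny `fakeDocument` object only stands in for a real browser. It shows that the text is absent from the raw HTML an HTTP client receives and appears only once the page's script runs.

```javascript
// Hypothetical dynamic page: the HTML ships an empty element plus a script
// that carries the data and the logic to display it.
const rawHtml = `
<html>
  <body>
    <div id="content"></div>
    <script>
      const data = { content: "Available 2024 on scrapfly.io, maybe." };
      document.getElementById("content").innerText = data.content;
    </script>
  </body>
</html>`;

// What a plain HTTP scraper can read: the text inside #content in the raw HTML.
function staticContent(html) {
  const match = html.match(/<div id="content">([\s\S]*?)<\/div>/);
  return match ? match[1].trim() : null;
}

// Toy stand-in for a browser: run the page's script against a fake document
// that knows only the #content element, then read the element back.
function renderedContent(html) {
  const script = html.match(/<script>([\s\S]*?)<\/script>/)[1];
  const element = { innerText: staticContent(html) };
  const fakeDocument = {
    getElementById: (id) => (id === "content" ? element : null),
  };
  new Function("document", script)(fakeDocument);
  return element.innerText;
}

console.log(JSON.stringify(staticContent(rawHtml)));   // ""
console.log(JSON.stringify(renderedContent(rawHtml))); // "Available 2024 on scrapfly.io, maybe."
```

A real browser does far more than this toy, but the gap between the two results is the same gap a plain HTTP scraper runs into.
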

In this tutorial, we'll take a look at how we can use headless browsers to scrape data from dynamic web pages.

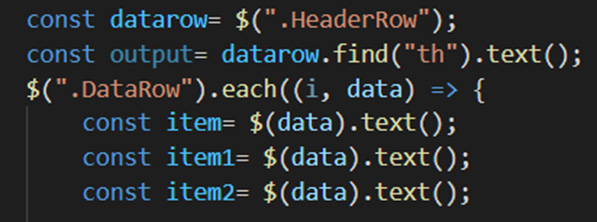

Many modern websites in 2023 rely heavily on JavaScript to render interactive data, using frameworks such as React, Angular and Vue.js, which makes web scraping a challenge. JavaScript and web scraping are both on the rise, and this beginner-friendly guide aims to make web scraping and crawling easy. In this article, you will learn how to crawl and scrape websites in JavaScript using Node.js and Puppeteer, regardless of the type of website. We will combine them to build a scraper and crawler from scratch. With these tools we can log into sites, click, scroll, execute JavaScript and more. Avoiding blocks is an essential part of website scraping, so we will also add some features to help in that regard.

When it comes to using Python to scrape dynamic content, we have two solutions: reverse engineer the website's behavior or use browser automation. That being said, there's a lot of space in the middle for niche, creative solutions. For example, a common tool used in web scraping is Js2Py, which can be used to execute JavaScript in Python.

However, before you start scraping, there are a few requirements that you must have. We will scrape book prices and titles from a website that is a fake bookstore set up specifically to help people practice scraping. First, create a folder and install the puppeteer library in it from the command line. Next, create a file inside that folder with any name you wish. The boilerplate code for that file (here `xyz.js`) starts by loading the library: `const puppeteer = require('puppeteer')`.

Something important to notice is that our `scrape()` function is an async function and makes use of the ES2017 async/await features. Because this function is asynchronous, it returns a Promise when it is called. When the async function finally returns a value, the Promise resolves. Since we're using an async function, we can use the `await` expression, which pauses the function's execution and waits for a Promise to resolve before moving on. It's okay if none of this makes sense immediately; it will become clearer as we continue with the tutorial.

We can test the scraper by adding a line of code to the `scrape()` function. The title and price of the chosen book should then be printed to the screen.
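
As a rough sketch of the kind of `scrape()` function described here, assuming the Puppeteer library is installed: the function below relies on `puppeteer.launch`, `browser.newPage`, `page.goto` and `page.$eval`. The URL and the CSS selectors are not given in the text, so they are left as parameters, and the optional third parameter is an addition made here so the browser library can be swapped out when experimenting.

```javascript
// Sketch only: `url` and `selectors` must be supplied by the caller,
// because the target site and its markup are not specified in the article.
// `browserLib` defaults to Puppeteer and is only loaded when not provided.
async function scrape(url, selectors, browserLib = require('puppeteer')) {
  // launch() returns a Promise; await pauses here until the browser is ready.
  const browser = await browserLib.launch();
  try {
    const page = await browser.newPage();
    await page.goto(url);

    // $eval runs the callback in the page on the first element that matches.
    const title = await page.$eval(selectors.title, (el) => el.textContent.trim());
    const price = await page.$eval(selectors.price, (el) => el.textContent.trim());

    // Returning a value resolves the Promise that scrape() handed to its caller.
    return { title, price };
  } finally {
    // Close the browser even if navigation or extraction throws.
    await browser.close();
  }
}
```

Calling `scrape(url, { title: 'h1', price: '.price' })` returns a Promise right away. The selectors in that call are hypothetical, and the resolved value can be read with `await` or with `.then(console.log)`.
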
